The Science Behind Gamified Assessments: Why Game-Based Evaluation Predicts Job Performance Better Than Interviews

By Smita Dinesh | March 3, 2026, 2:27 pm

Here is a question that separates organizations that hire well from those that hire expensively: does your primary candidate evaluation method actually predict job performance? For most Indian organizations, the honest answer is uncomfortable. The unstructured interview, which remains the dominant hiring tool across Indian industries, explains only about 4 to 14% of the variance in subsequent job performance, which is what the 0.20 to 0.38 validity range reported across decades of industrial-organizational psychology research amounts to once squared. That means well over 80% of what determines whether someone will succeed or struggle in a role is invisible to the interviewer sitting across the table, no matter how experienced, how intuitive, or how many years they have spent evaluating candidates.

Yet interviews persist as the cornerstone of hiring. Why? Because they feel informative. The human brain is exceptionally skilled at constructing coherent narratives from limited data. A firm handshake, a confident opening, a prestigious alma mater, a well-told success story, and the interviewer’s brain builds a compelling case: this person will be outstanding. The problem is that the case is constructed from cognitive biases rather than predictive evidence. Confirmation bias, halo effect, similarity attraction, first-impression anchoring: the list of documented interview biases runs into the dozens, each one systematically distorting the evaluation without the interviewer’s conscious awareness. Worse, interviewer confidence bears almost no statistical relationship to interviewer accuracy. The most confident evaluations are often the least reliable.

Gamified assessments represent a fundamentally different paradigm for talent evaluation. Rather than asking candidates to describe their capabilities, which is what interviews and traditional questionnaires do, gamified assessments observe what candidates actually do in carefully designed interactive scenarios. The behavioural signals captured during gameplay are implicit, meaning they emerge naturally from how the candidate approaches the task, not from how the candidate chooses to present themselves. The science behind this paradigm shift is robust, grounded in cognitive psychology, psychometric theory, behavioural economics, and two decades of research into game-based measurement. The results are striking: well-designed gamified assessments consistently demonstrate higher predictive validity for job performance than unstructured interviews, traditional personality questionnaires, and most other commonly used hiring methods.

This article examines the scientific foundations of gamified assessments, explains why they predict job performance better than conventional methods, and provides Indian talent acquisition leaders and HR analytics teams with the evidence needed to make informed decisions about integrating game-based evaluation into their hiring and talent management processes.

The Predictive Validity Hierarchy: What Decades of Research Show About Common Hiring Methods

Predictive validity is the statistical measure of how well an assessment method predicts future job performance. It is expressed as a correlation coefficient ranging from 0 (no prediction at all) to 1 (perfect prediction). In the real world of personnel selection, validity coefficients above 0.30 are considered strong, and anything above 0.50 is exceptional. No single assessment method achieves perfect prediction, but the differences between methods are large enough to have enormous practical consequences for hiring quality and organizational performance.
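As a rough illustration of what a validity coefficient is, the sketch below computes the Pearson correlation between assessment scores recorded at hiring and performance ratings collected later. The eight data pairs and the variable names are hypothetical, invented purely for this example; a real validation study would use far larger samples and correct for factors such as range restriction.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    return covariance / sqrt(spread_x * spread_y)


# Hypothetical data: assessment score at hiring, manager rating one year later.
assessment_scores = [52, 61, 47, 70, 58, 66, 43, 75]
performance_ratings = [3.1, 3.4, 3.3, 3.9, 2.8, 3.6, 3.0, 3.5]

validity = pearson_r(assessment_scores, performance_ratings)
print(f"Predictive validity (r) = {validity:.2f}")
```

The closer the result is to 1, the more closely the ranking of candidates on the assessment matches their later ranking on performance.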

The most comprehensive meta-analyses in industrial-organizational psychology, synthesizing data from thousands of studies across millions of candidates over several decades, have established a clear hierarchy of assessment method validity. Understanding this hierarchy is essential before evaluating where gamified assessments fit and why they represent a significant advancement in assessment science.

Comparison: Predictive Validity of Common Hiring Methods

| Assessment Method | Predictive Validity (r) | What It Measures | Key Limitations |
| --- | --- | --- | --- |
| Unstructured Interviews | 0.20 to 0.38 | Self-presentation, verbal fluency, social skills | Highly susceptible to interviewer bias, faking, similarity attraction; poor inter-rater reliability across interviewers |
| Structured Interviews | 0.44 to 0.51 | Job-relevant behaviours (when well-designed with validated rubrics) | Still relies on self-report; requires extensive interviewer training and calibration; time-intensive to scale |
| Traditional Personality Questionnaires | 0.22 to 0.33 | Self-reported personality traits across standard dimensions | Highly fakeable; measures what candidates say about themselves, not what they actually do; social desirability bias |
| Cognitive Ability Tests | 0.51 to 0.65 | General mental ability, abstract reasoning, problem-solving speed | Strong validity but adverse impact concerns across demographic groups; measures cognitive capacity, not behavioural tendency |
| Traditional Assessment Centres | 0.36 to 0.45 | Multiple competencies through role-plays, simulations, group exercises | Expensive (INR 8,000 to 25,000+ per candidate), time-consuming (4 to 8 hours), limited scalability; trained assessor availability |
| Work Sample Tests | 0.54 to 0.60 | Actual job task performance in controlled conditions | Limited to roles with easily testable tasks; cannot assess leadership, strategic thinking, or interpersonal dynamics |
| Gamified Assessments (Well-Designed) | 0.40 to 0.60+ | Implicit behavioural patterns, cognitive processes, decision-making under dynamic real-time conditions | Requires rigorous psychometric design and ongoing validation; quality varies significantly between providers |

The data reveals a striking pattern that should concern every talent acquisition leader in India. The most widely used method, the unstructured interview, has the weakest predictive power. The methods with the strongest predictive power, cognitive ability tests and work sample tests, are either limited in what they measure (cognitive capacity alone does not predict behaviour) or impractical to scale (you cannot create a work sample test for a strategic leadership role). Traditional assessment centres combine multiple methods and achieve moderate validity, but at costs and time investments that make them impractical for all but the most senior roles.
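To make the gaps between methods more tangible, a validity coefficient can be squared to give the share of performance variance it accounts for. The short sketch below applies that conversion to the ranges in the comparison table. The dictionary simply restates the table; treating the open-ended "0.60+" for gamified assessments as 0.60 is a simplifying assumption made for this example.

```python
# Validity ranges restated from the comparison table above.
# Assumption: the open-ended "0.60+" upper bound is treated as 0.60.
validity_ranges = {
    "Unstructured interviews": (0.20, 0.38),
    "Structured interviews": (0.44, 0.51),
    "Personality questionnaires": (0.22, 0.33),
    "Cognitive ability tests": (0.51, 0.65),
    "Assessment centres": (0.36, 0.45),
    "Work sample tests": (0.54, 0.60),
    "Gamified assessments": (0.40, 0.60),
}


def variance_explained(r):
    """Share of performance variance accounted for by a validity of r."""
    return r * r


for method, (low, high) in validity_ranges.items():
    print(f"{method}: {variance_explained(low):.0%} to {variance_explained(high):.0%}")
```

Read this way, even the strongest single methods leave most of the variance in job performance unexplained, which is why combining methods matters.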

Gamified assessments occupy a uniquely powerful position in this hierarchy. They combine strong predictive validity (0.40 to 0.60+) with scalability (digital delivery to thousands simultaneously), breadth of measurement (behavioural patterns, cognitive processes, interpersonal dynamics, emotional regulation), and candidate engagement that other high-validity methods cannot match. Understanding why requires examining the science behind how gamified assessments work.

The Four Scientific Principles That Make Gamified Assessments Work

Well-designed gamified assessments are not traditional psychometric tests with colourful game graphics painted on top. That superficial approach, sometimes called “chocolate-covered broccoli” in assessment science circles, adds engagement without changing what is actually measured. Genuinely scientific gamified assessments are built on four principles from cognitive psychology and psychometric theory that fundamentally transform both what is being measured and how the measurement occurs.

Principle 1: Implicit Measurement Over Explicit Self-Report

Traditional assessments, whether interviews, personality questionnaires, or situational judgment tests, rely on explicit self-report. They ask candidates to consciously describe, evaluate, or predict their own behaviour. “Tell me about a time you led a team through a difficult situation.” “Rate yourself on a scale of 1 to 5 on your ability to handle pressure.” “What would you do if a colleague disagreed with your approach?”

The problem with explicit self-report is well-documented across decades of psychology research. People are remarkably poor at accurately reporting their own behavioural tendencies. This is not because people are deliberately dishonest (though some are). It is because self-report is filtered through three powerful distortion mechanisms. First, social desirability: candidates consciously or unconsciously adjust their responses toward what they believe the employer wants to hear. Second, self-enhancement bias: most people genuinely overestimate their own capabilities. A well-known related finding is the Dunning-Kruger effect, in which people with low competence in a domain tend to overestimate their ability in it the most. Third, limited self-awareness: many behavioural patterns, particularly those related to decision-making speed, risk calibration, and stress responses, operate below conscious awareness, meaning candidates literally cannot report them accurately even when they try.

Gamified assessments use implicit measurement. Instead of asking candidates to describe how they make decisions, the assessment places them in interactive scenarios where they must actually make decisions. Instead of asking whether they are collaborative, the assessment observes how they behave when collaboration is required to advance through a scenario. The behavioural signals captured during gameplay emerge naturally, without the candidate consciously filtering their responses. Cognitive psychology research consistently demonstrates that implicit measures are more resistant to faking, more stable over time, and more predictive of actual real-world behaviour than explicit self-report measures.

Principle 2: Behavioural Observation at Micro-Second Resolution

When an experienced interviewer observes a candidate for 45 minutes, they capture perhaps 50 to 100 discrete behavioural observations, most of which are filtered through the interviewer’s own cognitive biases and attentional limitations. An interviewer cannot simultaneously track verbal content, vocal tone, facial expressions, body language, and their own questioning strategy with equal fidelity. Human attention is a bottleneck. Important signals are missed. Irrelevant signals are overweighted.

A well-designed gamified assessment captures thousands of data points per candidate in less time. EZYSS assessments, for example, capture over 3,000 behavioural data points per participant in approximately 25 minutes of gameplay. These data points extend far beyond what the candidate chose (the answer). They include how long the candidate deliberated before choosing (decision speed), whether decision speed changed as stakes increased (pressure response), how the candidate responded when an initial strategy failed (adaptive flexibility), how attention was allocated across competing demands (prioritization patterns), whether the candidate explored alternatives before committing (exploration versus exploitation tendency), and dozens of other micro-behavioural signals that are completely invisible to human observation but powerfully predictive of real-world performance.
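To give a sense of how raw gameplay events might become behavioural signals, here is a minimal sketch that derives three of the measures named above (decision speed, pressure response, and exploration tendency) from a small event log. Everything in it is hypothetical: the `DecisionEvent` fields, the "low"/"high" stakes labels, and the sample events are assumptions made for illustration and do not describe how EZYSS or any other platform actually records or scores behaviour.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class DecisionEvent:
    """One decision in a hypothetical gameplay log."""
    shown_at_ms: int      # when the choice was presented
    chosen_at_ms: int     # when the candidate committed to an option
    stakes: str           # "low" or "high" (hypothetical label)
    options_viewed: int   # alternatives inspected before committing

    @property
    def latency_ms(self) -> int:
        return self.chosen_at_ms - self.shown_at_ms


def summarize(events):
    """Derive a few illustrative behavioural features from an event log."""
    low = [e.latency_ms for e in events if e.stakes == "low"]
    high = [e.latency_ms for e in events if e.stakes == "high"]
    return {
        # Decision speed: average deliberation time per choice.
        "mean_decision_ms": mean([e.latency_ms for e in events]),
        # Pressure response: how much slower (or faster) choices get as stakes rise.
        "pressure_shift_ms": mean(high) - mean(low),
        # Exploration tendency: alternatives inspected before committing.
        "mean_options_viewed": mean([e.options_viewed for e in events]),
    }


events = [
    DecisionEvent(0, 1200, "low", 2),
    DecisionEvent(5000, 5900, "low", 1),
    DecisionEvent(10000, 12400, "high", 3),
    DecisionEvent(15000, 17000, "high", 4),
]
features = summarize(events)
print(features)
```

A production system would capture many more event types and would need validation evidence linking each derived feature to job performance before using it in scoring.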

This measurement density is what allows gamified assessments to achieve validity coefficients that rival or exceed cognitive ability tests while measuring a much broader range of competencies. Where a cognitive test measures processing speed and reasoning ability, a gamified assessment simultaneously captures decision-making quality, risk calibration, emotional regulation, strategic flexibility, collaborative orientation, and learning agility, all from the same 25-minute interaction.

Principle 3: Ecological Validity Through Dynamic Scenario Design

Ecological validity refers to how closely an assessment environment mirrors the real-world environment it is trying to predict performance in. Traditional personality questionnaires have extremely low ecological validity: answering Likert-scale questions in a quiet room on a computer screen bears no resemblance to the messy, dynamic, multi-demand reality of actual work. Even structured interviews have limited ecological validity, because describing past behaviour in a retrospective conversation is fundamentally different from displaying behaviour in real time under actual task demands.

Gamified assessments achieve significantly higher ecological validity through dynamic scenario design. A well-designed assessment module might require candidates to make sequential decisions under increasing time pressure while managing competing priorities, which precisely mirrors the cognitive demands of most knowledge work and management roles. Another module might require adaptive strategy adjustment when initial approaches produce diminishing returns, replicating the iterative problem-solving that characterizes effective performance in complex, changing environments. Yet another might present collaborative versus competitive scenarios where the candidate must calibrate how much to cooperate versus how much to pursue individual advantage, reflecting the interpersonal dynamics of team-based work.

The key insight from ecological validity research is that behaviour is profoundly context-dependent. People behave differently in different environments. A candidate who appears calm and strategic when describing past behaviour in an interview may become impulsive and reactive when placed in a scenario that actually creates time pressure and competing demands. The assessment that recreates the key features of the target work environment produces behaviour that is more representative of how the person will actually perform in that environment. This is why ecological validity is such a powerful driver of predictive accuracy.

Principle 4: Structural Resistance to Faking Through Task-Based Design

Faking is one of the most persistent and damaging challenges in personnel assessment. Research estimates that 30 to 50% of candidates engage in some form of response distortion on traditional personality questionnaires, with even higher rates for more desirable or competitive positions. The problem is not merely that some candidates are dishonest. It is that the assessment format makes honest responding irrational. When a personality questionnaire asks “I work well under pressure: strongly agree to strongly disagree,” the socially desirable answer is obvious. A candidate who answers honestly (“I sometimes struggle under pressure”) is punished relative to a candidate who fakes (“strongly agree”). The assessment format incentivizes distortion.

Gamified assessments are structurally resistant to faking because they are task-based rather than question-based. When a candidate is navigating an interactive scenario that requires real-time decisions, there is no obviously “correct” response to fake toward. The candidate does not know which of their hundreds of micro-decisions the assessment is measuring, or how those decisions map to competency scores. Their natural behavioural tendencies emerge through how they approach the task, not through how they describe themselves. Research comparing faking rates across assessment methods consistently shows that gamified and simulation-based assessments produce significantly lower response distortion than self-report questionnaires, which directly translates to more accurate measurement of actual capabilities.

Why Interviews Fail: The Cognitive Bias Cascade That No Amount of Training Fully Eliminates

Interviews remain the most popular hiring method because they produce a strong subjective feeling of insight. After 30 to 60 minutes with a candidate, most interviewers feel confident in their evaluation. They believe they have seen enough to make a sound judgment. This confidence, however, bears almost no statistical relationship to accuracy. The gap between interviewer confidence and interview validity is one of the most robust and troubling findings in decades of personnel selection research.

The core problem is that interviews activate a cascade of cognitive biases that interviewers are largely unable to recognize or control, even with training:

- First impression anchoring: Research demonstrates that most interviewers form a preliminary evaluation within the first 4 to 10 minutes of an interview. The remaining 20 to 50 minutes are spent unconsciously seeking evidence that confirms this initial impression and discounting evidence that contradicts it. This is not a training issue. It is a fundamental feature of human cognition. Anchoring occurs automatically, below conscious awareness, and persists even when interviewers are explicitly warned about it.

- Similarity attraction bias: Interviewers systematically rate candidates who share their educational background, communication style, regional origin, social references, or career trajectory more favourably than equally qualified candidates who do not. This bias is particularly damaging in the Indian context, where educational institution hierarchies, linguistic backgrounds, and regional networks carry significant social weight in professional settings. The result is systematic exclusion of capable talent from non-traditional backgrounds.

- Halo and horn effects: A single impressive attribute, whether a prestigious degree, a well-known previous employer, or a confident speaking style, inflates ratings across all competency dimensions. Conversely, a single negative attribute, perhaps an unconventional career path or a moment of nervousness, depresses all ratings regardless of actual competency evidence. The interviewer’s overall impression bleeds into every individual competency rating, making the evaluation far less differentiated than it appears.

- Verbal fluency conflation: This bias is particularly consequential in India’s multilingual talent market. Interviewers routinely conflate verbal fluency, the ability to speak articulately and confidently in the interview language, with actual job competence. Candidates who are eloquent but lack deep capability are consistently rated higher than candidates who are highly capable but less verbally polished. For organizations interviewing in English, this bias systematically disadvantages candidates from vernacular-medium educational backgrounds, regardless of their actual skills, domain knowledge, or performance potential.

- Recency and primacy effects: When interviewing multiple candidates in a single day, interviewers disproportionately remember the first and last candidates while middle candidates blur together. This means that interview scheduling, which is entirely arbitrary from a competency standpoint, has a measurable impact on candidate evaluation. The candidate who happens to interview third out of eight is at a statistical disadvantage compared to the first or eighth candidate.

- Confirmation bias in reference to resumes: Interviewers who review a candidate’s resume before the interview form expectations that shape their questioning and interpretation. A candidate from a prestigious company receives benefit-of-the-doubt interpretations for ambiguous answers. A candidate from a lesser-known company faces sceptical follow-up questions for the same answers. The interview becomes a mechanism for confirming resume-based expectations rather than independently evaluating capability.

Structured interviews mitigate some of these biases through standardized questions, behavioural anchors, and trained interviewers. But they do not eliminate them. And in practice, few Indian organizations implement truly structured interviews consistently. The moment a hiring manager deviates from the script, asks an unscripted follow-up, or makes a holistic judgment based on “gut feeling,” the biases re-enter the evaluation unchecked.

Gamified assessments take the interviewer, and with the interviewer these biases, out of the initial evaluation. The candidate interacts with a standardized scenario. Data is captured automatically by the system. Scoring is algorithmic, applying the same criteria with the same precision to every candidate. This does not make gamified assessments perfect: the scoring model itself still has to be validated and monitored for fairness. But it makes them fundamentally more consistent, more scalable, and more resistant to the human cognitive limitations that systematically undermine interview accuracy.

How EZYSS Applies These Scientific Principles in Practice

EZYSS, developed by Able Ventures, is a gamified assessment platform that translates the four scientific principles into a practical, scalable hiring and talent assessment tool deployed across 300+ Indian organizations. Understanding how EZYSS operationalizes these principles illustrates what differentiates a scientifically grounded gamified assessment from superficial gamification that merely adds points, badges, and leaderboards to traditional questionnaires.

- Implicit behavioural capture: Each EZYSS module is a task-based interactive scenario, not a personality questionnaire with game visuals. Candidates engage with the scenario naturally, and their behavioural patterns are captured implicitly through their actions, response timing, strategy choices, error recovery approaches, and adaptive responses to changing scenario conditions.

- 3,000+ behavioural data points: Captured per participant across the assessment battery. This data density enables granular behavioural profiling that is simply impossible with methods capturing 50 to 100 data points. The difference is analogous to the difference between a standard photograph and a high-resolution microscope image. Both show the same subject, but the detail available for analysis is incomparably richer.

- 4 to 6 minutes per module, 25 minutes total: The full EZYSS battery completes in approximately 25 minutes, dramatically faster than traditional assessment centres (4 to 8 hours) while producing richer, more granular behavioural data. This compact completion time is critical for candidate experience and for practical deployment at scale.

- Language-independent design: EZYSS modules use visual cues, spatial reasoning, and interaction-driven mechanics rather than text-heavy questions. This architectural choice removes the language bias that plagues both interviews and traditional questionnaires in India’s multilingual talent market, ensuring that actual capability, not English fluency or verbal confidence, determines the evaluation.

- Cross-demographic fairness: Because EZYSS measures implicit behavioural patterns rather than self-reported traits or interview performance, it reduces the adverse impact concerns that affect some traditional assessment methods. Candidates from different educational, linguistic, socioeconomic, and regional backgrounds are evaluated on identical behavioural dimensions through identical standardized scenarios, producing evaluations that reflect capability rather than background.

The practical deployment model that produces the highest hiring accuracy combines EZYSS with structured interviews in a two-stage process. EZYSS provides objective, scalable screening across large candidate volumes, identifying the candidates whose behavioural profiles best match role requirements. Structured interviews then evaluate the shortlisted candidates on dimensions where trained human judgment adds genuine value: cultural fit assessment, interpersonal chemistry evaluation, motivation alignment, and the nuanced exploration of context-specific experiences. This combination, gamified assessment for capability screening followed by structured interviews for finalist evaluation, represents the current evidence-based best practice for maximizing hiring accuracy.
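The two-stage flow can be sketched in a few lines. This is only an illustration under stated assumptions: the `match_score` field, the rubric dimensions, the rating scale, and the candidate data are all hypothetical, and a real process would apply validated cut-offs rather than a simple top-N rule.

```python
def shortlist(candidates, top_n):
    """Stage 1: keep the top_n candidates by assessment match score."""
    ranked = sorted(candidates, key=lambda c: c["match_score"], reverse=True)
    return ranked[:top_n]


def interview_score(ratings):
    """Stage 2: average the structured-interview rubric ratings."""
    return sum(ratings.values()) / len(ratings)


# Hypothetical assessment results for four candidates.
candidates = [
    {"name": "Candidate A", "match_score": 0.62},
    {"name": "Candidate B", "match_score": 0.81},
    {"name": "Candidate C", "match_score": 0.47},
    {"name": "Candidate D", "match_score": 0.74},
]
finalists = shortlist(candidates, top_n=2)

# Hypothetical rubric ratings (1 to 5) from structured interviews of the finalists.
rubric_ratings = {
    "Candidate B": {"motivation": 4, "stakeholder_handling": 3, "role_context": 5},
    "Candidate D": {"motivation": 5, "stakeholder_handling": 4, "role_context": 4},
}
ranked_finalists = sorted(
    finalists,
    key=lambda c: interview_score(rubric_ratings[c["name"]]),
    reverse=True,
)
for c in ranked_finalists:
    print(c["name"], round(interview_score(rubric_ratings[c["name"]]), 2))
```

The point of the structure is that interviewer time is spent only on candidates who have already cleared an objective screen.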

Traditional Assessment Versus Gamified Assessment: Dimension-by-Dimension Comparison

| Dimension | Traditional (Interviews + Questionnaires) | Gamified Assessment (e.g., EZYSS) |
| --- | --- | --- |
| Measurement Approach | Explicit self-report; candidates consciously describe their capabilities and preferences | Implicit behavioural observation; candidates demonstrate capabilities through task performance without conscious filtering |
| Data Points Per Candidate | 50 to 100 (interview) or 30 to 80 (questionnaire); filtered through human attention limitations | 3,000+ behavioural data points captured automatically at micro-second resolution |
| Faking Resistance | Low to moderate; socially desirable responses are easy to identify and replicate; 30 to 50% response distortion rate | High; task-based design makes it difficult to determine which micro-decisions are being measured or scored |
| Cognitive Bias Vulnerability | High; anchoring, halo effect, similarity attraction, and verbal fluency biases systematically distort evaluations | Low; standardized scenarios and algorithmic scoring eliminate human evaluator bias entirely |
| Scalability | Low; each interview requires dedicated interviewer time; quality depends on individual interviewer skill | High; assessments deploy to thousands simultaneously with identical quality and consistency |
| Candidate Experience | Often stressful; performance anxiety and social evaluation pressure reduce authentic behaviour display | Engaging; game-like format reduces evaluation anxiety, producing more natural and authentic behavioural data |
| Language Dependence | High; verbal fluency in interview language strongly influences evaluation, disadvantaging non-native speakers | Low; visual and interaction-based design minimizes language barriers across India’s multilingual talent pool |
| Completion Time | 30 to 60 minutes per interview; 4 to 8 hours for full assessment centre | Approximately 25 minutes for full battery; individual modules 4 to 6 minutes each |
| Predictive Validity | 0.20 to 0.38 (unstructured) or 0.44 to 0.51 (structured) | 0.40 to 0.60+ for rigorously designed and validated gamified assessments |
| Cost Efficiency at Scale | High per-candidate cost; assessment centres INR 8,000 to 25,000+; interviews carry substantial opportunity cost of senior interviewer time | Lower per-candidate at scale; digital delivery eliminates logistics costs; consistent quality without trained assessor dependency |

Beyond Hiring: How Gamified Assessment Science Strengthens the Entire Talent Lifecycle

While the hiring use case is where most organizations first encounter gamified assessments, the science has applications that extend across the entire talent management lifecycle. The same behavioural data that predicts job performance also informs and strengthens multiple downstream talent processes, creating compounding value from a single assessment investment.

- High-potential identification: Gamified assessment data objectively identifies individuals whose behavioural patterns indicate capacity for significantly larger or more complex roles, independent of manager advocacy, political visibility, or tenure-based assumptions. This evidence-based identification transforms talent management strategy from the opinion-driven process it is in most organizations to a data-driven system that surfaces talent the organization might otherwise overlook.

- Precision development targeting: When assessment data reveals that a high-potential leader demonstrates strong strategic thinking but lower collaborative decision-making, the corporate training programme and learning journeys designed for that individual can target the specific gap rather than delivering generic leadership content. This precision is what produces behaviour change rates of 60 to 75% versus below 15% for untargeted training.

- Evidence-based succession calibration: Assessment data provides the objective readiness evidence that credible succession planning requires. Pipeline conversations shift from subjective impressions (“we think she is ready”) to evidence-based analysis (“her assessment shows strong strategic capability and improving stakeholder management, with a specific gap in financial acumen that a targeted professional development programme can close within six months”). This specificity makes succession planning actionable rather than aspirational.

- Culture diagnostic intelligence: Aggregate assessment data across teams, functions, and leadership levels reveals collective behavioural patterns that inform culture transformation initiatives. If assessment data consistently shows risk aversion across the leadership population, that is a cultural pattern with direct strategic implications. The Culture NXT framework can then address this pattern systematically through diagnosis, vision alignment, and targeted intervention.

- Leadership development ROI measurement: By deploying gamified assessments before and after leadership development programmes, organizations can objectively measure behavioural change and programme ROI with precision that participant satisfaction surveys and self-reported learning assessments cannot approach. This measurement capability transforms L&D from a faith-based investment to a data-driven strategic capability.
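For the before-and-after measurement described in the last point, one simple way to summarize behavioural change is the mean paired difference, along with a standardized effect size. The sketch below assumes each participant has a single composite score before and after the programme; the scores are hypothetical, and a real evaluation would also want a comparison group to separate programme effects from practice effects.

```python
from statistics import mean, stdev

# Hypothetical composite scores for the same five participants,
# before and after a leadership development programme.
pre_scores = [54, 61, 48, 70, 57]
post_scores = [60, 66, 47, 78, 63]

# Paired differences: each participant is compared with their own baseline.
changes = [post - pre for pre, post in zip(pre_scores, post_scores)]

mean_change = mean(changes)
# Standardized effect: mean change relative to the spread of individual changes.
effect_size = mean_change / stdev(changes)

print(f"Mean change: {mean_change:.1f} points")
print(f"Standardized effect size: {effect_size:.2f}")
```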

Building the Business Case: What Indian TA Leaders Should Present to the C-Suite

For talent acquisition leaders seeking to introduce gamified assessments into their organizations, the business case rests on four pillars that resonate with financial and operational decision-makers in the C-suite.

- Hiring accuracy and mis-hire cost reduction: Even a modest improvement in hiring accuracy, moving from a validity coefficient of 0.30 to 0.50, produces substantial financial returns. Take an organization making 200 hires per year, where the average cost of a mis-hire (recruitment costs, onboarding investment, lost productivity during the mis-hire period, disruption costs, and replacement recruitment) runs to approximately INR 6 lakhs. If roughly 1 in 10 of those hires is a mis-hire (an illustrative baseline of 20 mis-hires a year), cutting mis-hires by 20% avoids 4 of them, which translates to INR 24 lakhs in annual savings. The savings scale with hiring volume and with the baseline mis-hire rate, reaching crores for the largest hiring organizations, and the calculation does not even account for the harder-to-quantify costs of team disruption and morale impact from mis-hires.

- Time and operational efficiency: Gamified assessments can objectively screen 500 candidates in the time it takes to schedule and conduct 10 interviews. This efficiency gain is transformative for organizations dealing with high-volume hiring scenarios: campus recruitment drives, bulk frontline hiring, or rapid scaling where interview-based screening is simply impractical. The time savings extend beyond the assessment itself to include reduced scheduling coordination, elimination of interviewer preparation time, and faster pipeline progression.

- Diversity, fairness, and expanded talent access: By removing the language biases, interviewer biases, and socioeconomic background biases inherent in traditional assessment methods, gamified assessments help organizations access talent pools they have been systematically overlooking. This is not merely a diversity, equity, and inclusion argument, though it serves that purpose powerfully. It is a competitive advantage argument: the most capable candidate for any given role might be someone whose verbal presentation skills, educational pedigree, or social network would have caused them to be screened out by conventional hiring methods.

- Employer brand and candidate experience enhancement: In India’s increasingly competitive talent market, the candidate experience during the hiring process directly affects employer brand perception, offer acceptance rates, and word-of-mouth reputation. Gamified assessments consistently produce higher candidate satisfaction scores than traditional assessment batteries. Candidates perceive them as modern, technologically sophisticated, engaging, and respectful of their time, all attributes that strengthen the employer’s talent attraction capability and differentiate the organization in a crowded recruitment market.
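The mis-hire arithmetic in the first pillar is easy to adapt to your own numbers. The sketch below is a minimal calculator, not a financial model. The 200 hires, INR 6 lakhs per mis-hire, and 20% improvement come from the example above; the 10% baseline mis-hire rate is an assumption introduced here because it is the rate at which those figures yield INR 24 lakhs.

```python
def annual_mis_hire_savings(
    hires_per_year,
    baseline_mis_hire_rate,
    cost_per_mis_hire_lakhs,
    relative_reduction,
):
    """Estimated annual savings (in INR lakhs) from avoiding mis-hires."""
    baseline_mis_hires = hires_per_year * baseline_mis_hire_rate
    mis_hires_avoided = baseline_mis_hires * relative_reduction
    return mis_hires_avoided * cost_per_mis_hire_lakhs


savings = annual_mis_hire_savings(
    hires_per_year=200,
    baseline_mis_hire_rate=0.10,   # assumption: 1 in 10 hires is a mis-hire
    cost_per_mis_hire_lakhs=6,
    relative_reduction=0.20,       # 20% fewer mis-hires
)
print(f"Estimated annual savings: INR {savings:.0f} lakhs")
```

Replacing the inputs with your organization's actual hiring volume, mis-hire rate, and cost per mis-hire gives a first-pass figure for the C-suite conversation.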

Frequently Asked Questions

Yes, well-designed gamified assessments are built on established psychometric principles and undergo rigorous validation studies. The critical distinction is between genuinely scientific gamified assessments, which are designed by organizational psychologists, validated against actual job performance criteria, and continuously refined based on accumulating data, and superficial gamification, which merely adds game elements (points, badges, animations) to traditional questionnaires without changing the underlying measurement science. EZYSS has validated assessment science embedded into the gameplay mechanics, ensuring that the engaging format serves the measurement goals rather than merely decorating them.

No, gamified assessments do not replace interviews, and they should not. The evidence-based optimal approach uses gamified assessments and structured interviews in combination, each playing to its measurement strengths. Gamified assessments are most effective as an early-stage, scalable screening tool that objectively identifies candidates whose behavioural profiles match role requirements. Structured interviews then evaluate shortlisted candidates on dimensions where trained human judgment adds genuine value: cultural fit, interpersonal chemistry, motivation alignment, and the nuanced evaluation of context-specific experiences. Together, this two-stage combination produces significantly higher overall hiring accuracy than either method deployed alone.

A common concern is that candidates will not take a game-based format seriously, and the data addresses it decisively. Gamified assessments are not casual games. They are carefully designed interactive tasks that require focused attention, strategic decision-making, and genuine cognitive effort. Candidates consistently report finding gamified assessments more engaging, more interesting, and more respectful of their time than traditional questionnaire batteries or lengthy assessment centre exercises. Completion rates for gamified assessments are significantly higher than for traditional assessments, which is direct behavioural evidence that candidates engage seriously and persist through the full evaluation.

Yes, gamified assessments are well suited to senior-level roles. The behavioural competencies that matter most at senior levels, such as strategic thinking under uncertainty, risk calibration, cognitive flexibility, adaptive leadership, and complex decision-making, are precisely the competencies that gamified assessments measure most effectively through their implicit, task-based design. The implicit measurement approach is particularly valuable at senior levels, where candidates are especially skilled at managing their self-presentation in interviews and questionnaires. EZYSS has been successfully deployed for leadership pipeline identification, succession planning calibration, and senior executive hiring across a wide range of Indian organizations.

EZYSS is designed to be intuitive and accessible, requiring no complex instructions, gaming knowledge, or prior experience with digital games. The visual, interaction-driven design means candidates do not need advanced technology skills or familiarity with gaming conventions. In practice, organizations find that candidates across all age groups and technology comfort levels complete the assessments without difficulty. The assessments measure behavioural patterns and cognitive processes, not gaming proficiency or digital dexterity.

Well-designed gamified assessments can measure a broad and growing range of behavioural and cognitive competencies, including decision-making quality and speed, risk calibration and tolerance, strategic thinking and forward planning, attention management and task prioritization, cognitive flexibility and adaptability, collaborative versus competitive orientation, emotional regulation under pressure, learning agility and feedback responsiveness, persistence and resilience under setbacks, and information processing patterns. The specific competencies measured depend on the design of each assessment module.

Implementation is relatively straightforward. EZYSS can be deployed within weeks once the organization has defined the target competency profiles for the roles being assessed. The setup process involves mapping role requirements to assessment modules, configuring reporting dashboards and score interpretation guidelines, and training the talent acquisition team on integrating assessment reports into their decision-making process. Most organizations are running live assessments within 3 to 4 weeks of initial engagement.

The cost comparison strongly favours gamified assessments at scale. While the per-assessment unit cost varies by volume and configuration, the total cost of assessment per successful hire is usually significantly lower than with traditional methods once you account for interviewer time saved, fewer costly mis-hires, faster time-to-hire (which reduces vacancy costs), and the elimination of expensive in-person assessment centre logistics (assessor fees, venue costs, candidate travel). For organizations making 100+ hires per year, the return on investment is typically positive within the first year of implementation.

Most well-designed gamified assessment platforms offer integration capabilities with common applicant tracking systems and HRIS platforms. Assessment results can be exported in standard formats and incorporated into the organization’s broader talent data ecosystem. This integration is important for ensuring that assessment data informs not just the initial hiring decision but also downstream talent management processes including onboarding, development planning, performance management, and succession calibration.

Gamified assessments advance diversity by structurally removing several bias sources that disadvantage underrepresented candidates in traditional processes. Language-independent design levels the playing field for candidates from diverse linguistic backgrounds. Algorithmic scoring eliminates interviewer biases related to gender, age, physical appearance, accent, and socioeconomic markers. Task-based measurement evaluates what candidates can actually do, not how they present themselves verbally or what professional networks they have access to. The result is a more meritocratic evaluation process that helps organizations access the full breadth of available talent rather than the narrow segment that traditional methods surface.

Recent Blogs

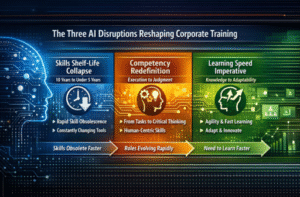

Corporate Training in the Age of AI: What Indian L&D Teams Must Rethink in 2026

Artificial intelligence is not coming to Indian workplaces. It is already here. It is embedded in customer

How to Design a Talent Management Strategy That Aligns People Growth with Business Goals

Here is a question that separates organizations that grow sustainably from those that grow chaotically: does your

Why Culture NXT Is the Framework Indian Organizations Need to Survive the Next Decade of Disruption

There is a hard truth that most Indian boardrooms are not ready to hear. The organizations that